Today I would like to take the opportunity to write about the inverse square law: what it is and why it's important for us as Wi-Fi engineers to understand. It tells us how RF behaves as distance increases.

I will start with a light example, which is easy to understand, because light works the same way as RF.

A characteristic of light is that once it leaves the source, its intensity starts decreasing very fast.

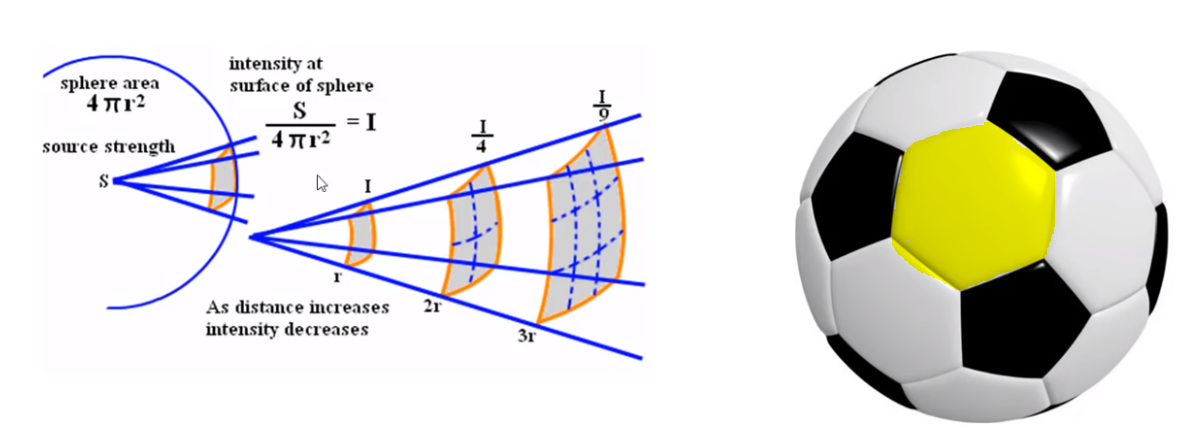

Let's suppose we place a light bulb (or, in a WLAN scenario, an antenna) and want to measure the intensity of the light or signal on one panel of the football, highlighted in yellow.

Surface area of the football (a sphere): $A = 4\pi r^2$

$S$ = power emitted by the light source

To measure the intensity of the light on that yellow square of the football, we spread the source's power over the whole surface of the sphere, whose area is $4\pi r^2$.

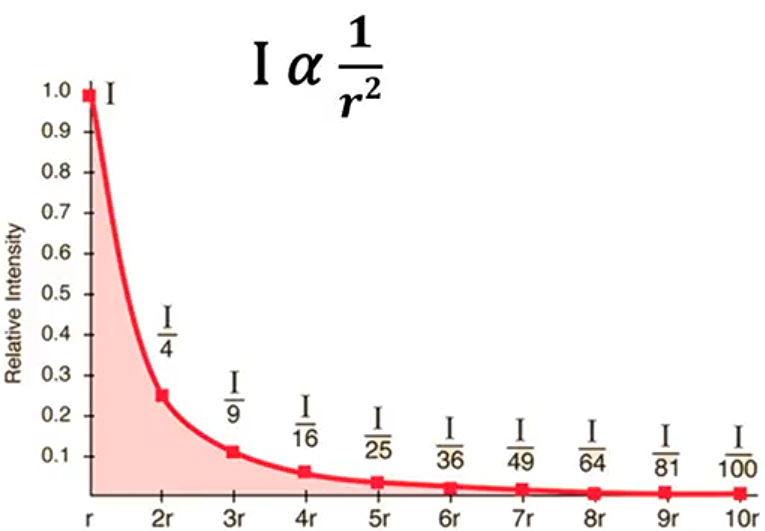

The formula for intensity is $I = \dfrac{S}{4\pi r^2}$. From the formula we can see that as the radius increases, the intensity decreases with the square of the radius. If the intensity at radius 1 is 1, then at radius 2 it drops to a quarter (1/4). So, if you move three times as far from the source, the intensity drops to 1/9.
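To put numbers on those ratios, here is a minimal Python sketch of $I = S/(4\pi r^2)$, assuming an ideal point source that radiates equally in all directions (real Wi-Fi antennas are not isotropic). The function names are illustrative only.

```python
import math

def intensity(source_power: float, r: float) -> float:
    """Power spread evenly over a sphere of area 4*pi*r^2 (isotropic point source)."""
    return source_power / (4 * math.pi * r ** 2)

def relative_intensity(r: float, r_ref: float = 1.0) -> float:
    """Intensity at distance r as a fraction of the intensity at r_ref."""
    return intensity(1.0, r) / intensity(1.0, r_ref)

# Distances are in any consistent unit; only the ratio matters.
for r in (1, 2, 3, 4):
    print(f"r = {r}: {relative_intensity(r):.4f} of the intensity at r = 1")
```

Because the source power cancels out of the ratio, the result depends only on distance: 1, 1/4, 1/9, 1/16 and so on.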

The same effect can be seen in the example below, where light from a source shines on eggs: as the distance increases, the intensity of the light reaching the eggs decreases.

So, if you keep moving away from the source, the intensity drops dramatically.

If the intensity at radius 1 is 100%, then at double the distance it drops to 25%, and it keeps dropping from there.

Now let's look at the butter gun example. We shoot butter at a slice of toast from 1 foot (or 1 meter) away, and it covers the toast. If we double the distance, the spray spreads over a circle with twice the radius, so the same amount of butter now covers 4 slices of toast, and the layer of butter on each slice is only a quarter as thick as it was at the original distance.

This does not apply only to visible light; it holds across the entire electromagnetic spectrum. Radio waves, microwaves, and infrared all obey the inverse square law.

It’s as simple as that. What we haven’t discussed in depth is the relationship between distance and the received power of RF waves, which is also simple, but less intuitive. Once you understand just how dramatically distance impacts reception, you’ll be fighting for every inch.

As radio waves travel outward from their source – a transmitter – they lose intensity, and fast. The intensity of radio waves over distance obeys the inverse-square law, which states that intensity is inversely proportional to the square of the distance from a source.

Think of it this way: double the distance, and you get a quarter of the power. This works the other way around, too, and it is crucial to the point we're trying to get across here: halve the distance, and received power increases fourfold. So, while you don't always have the freedom to move a receiver as close as you want, shortening the distance by just a few inches can produce a surprisingly large improvement in signal, because of the inverse-square relationship.
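Wi-Fi engineers usually reason in decibels rather than ratios. The sketch below (function names are illustrative) converts a change in distance into the inverse-square power ratio and its dB equivalent, assuming free space with nothing else changing. It shows the familiar rule of thumb that doubling the distance costs about 6 dB and halving it gains about 6 dB.

```python
import math

def power_ratio(d_old: float, d_new: float) -> float:
    """Received power at d_new relative to d_old under the inverse-square law."""
    return (d_old / d_new) ** 2

def change_db(d_old: float, d_new: float) -> float:
    """The same change in decibels (positive means a stronger signal)."""
    return 10 * math.log10(power_ratio(d_old, d_new))

print(power_ratio(10, 20), round(change_db(10, 20), 2))  # double the distance: 0.25 -6.02
print(power_ratio(10, 5), round(change_db(10, 5), 2))    # halve the distance: 4.0 6.02
print(round(change_db(10, 9), 2))                        # move a receiver from 10 ft to 9 ft
```

Since only the ratio of distances matters, feet and meters give the same answer as long as both arguments use the same unit.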

Although intensity drops very fast, radio waves can still travel far enough to cause adjacent-channel interference (ACI) and co-channel interference (CCI). The only way to reduce ACI and CCI is correct design: AP location, antenna types, and transmit power.

Note: On the topic of intensity, let's talk about 2.4 GHz vs 5 GHz. We often hear that 5 GHz travels a shorter distance than 2.4 GHz. Is that really true? I am afraid it's not.

5 GHz will travel the same distance as 2.4 GHz. If you fired 2.4 GHz and 5 GHz signals through a vacuum towards Mars today, both would get there.
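The intensity formula $I = S/(4\pi r^2)$ contains no frequency term, which is why both bands spread out identically in a vacuum. The sketch below, assuming both radios transmit the same 100 mW and using an arbitrary 10 m distance, shows what frequency does change: each 5 GHz photon carries about 2.08 times the energy of a 2.4 GHz photon ($E = hf$), so for the same power the 5 GHz transmitter emits proportionally fewer photons per second, and the intensity at any given distance comes out the same.

```python
import math

PLANCK_H = 6.62607015e-34  # Planck constant in J*s (exact SI value)

def photon_energy(freq_hz: float) -> float:
    """E = h * f: energy of a single photon in joules."""
    return PLANCK_H * freq_hz

def photons_per_second(power_w: float, freq_hz: float) -> float:
    """Photon emission rate of a transmitter radiating power_w at freq_hz."""
    return power_w / photon_energy(freq_hz)

def intensity(power_w: float, r_m: float) -> float:
    """Inverse-square intensity in W/m^2; frequency does not appear."""
    return power_w / (4 * math.pi * r_m ** 2)

P_TX = 0.1  # assumed transmit power of 100 mW for both bands (illustrative)
R = 10.0    # assumed distance in meters (illustrative)

for f in (2.4e9, 5.0e9):
    print(f"{f / 1e9} GHz: E = {photon_energy(f):.3e} J, "
          f"{photons_per_second(P_TX, f):.3e} photons/s, "
          f"I at {R} m = {intensity(P_TX, R):.3e} W/m^2")
```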

Here is another factor to consider: 5 GHz has a shorter wavelength, and each 5 GHz photon carries more energy than a 2.4 GHz photon, because the energy of a photon is directly proportional to its frequency ($E = hf$). That does not make the 5 GHz signal more intense, though: for the same transmit power, a 5 GHz radio simply emits fewer photons per second, so the intensity at a given distance is the same. So why does 5 GHz have less range than 2.4 GHz?

The shorter wavelength of 5 GHz signals means they diffract less around obstacles and are attenuated more when passing through them. As a result, 5 GHz signals may not travel as far as 2.4 GHz signals in a real environment, and they are more affected by the walls and objects around them.

Obstacles: 2.4 GHz signals generally pass through obstacles such as walls and furniture with less loss than 5 GHz signals, and their longer wavelength also lets them diffract around obstacles more easily.

Distance: In practice, 2.4 GHz signals reach farther than 5 GHz signals. Absorption by air is negligible at both frequencies; the difference comes from the obstacles above and from the receiving antenna. For antennas of the same gain, a 5 GHz antenna has a smaller effective area (it scales with the square of the wavelength), so it captures less of the same wavefront.
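A common way to put a number on the range difference between the bands is the free-space path loss (FSPL) formula, FSPL(dB) = 20·log10(4πdf/c), which assumes isotropic antennas and no obstacles. Its frequency term reflects the receiving antenna: an isotropic antenna's effective aperture is λ²/(4π), so at 5 GHz it collects a smaller patch of the same wavefront. The sketch below uses an illustrative 10 m distance; the gap between the bands works out to about 6.4 dB regardless of distance, while doubling the distance adds about 6 dB at either band.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def fspl_db(distance_m: float, freq_hz: float) -> float:
    """Free-space path loss between isotropic antennas: 20*log10(4*pi*d*f/c)."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / C)

def effective_aperture_m2(freq_hz: float) -> float:
    """Effective area of an isotropic (0 dBi) antenna: wavelength^2 / (4*pi)."""
    wavelength = C / freq_hz
    return wavelength ** 2 / (4 * math.pi)

d = 10.0  # illustrative distance in meters
for f in (2.4e9, 5.0e9):
    print(f"{f / 1e9} GHz: FSPL at {d} m = {fspl_db(d, f):.1f} dB, "
          f"isotropic aperture = {effective_aperture_m2(f) * 1e4:.2f} cm^2")

print(f"5 GHz extra loss: {fspl_db(d, 5.0e9) - fspl_db(d, 2.4e9):.2f} dB")
```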